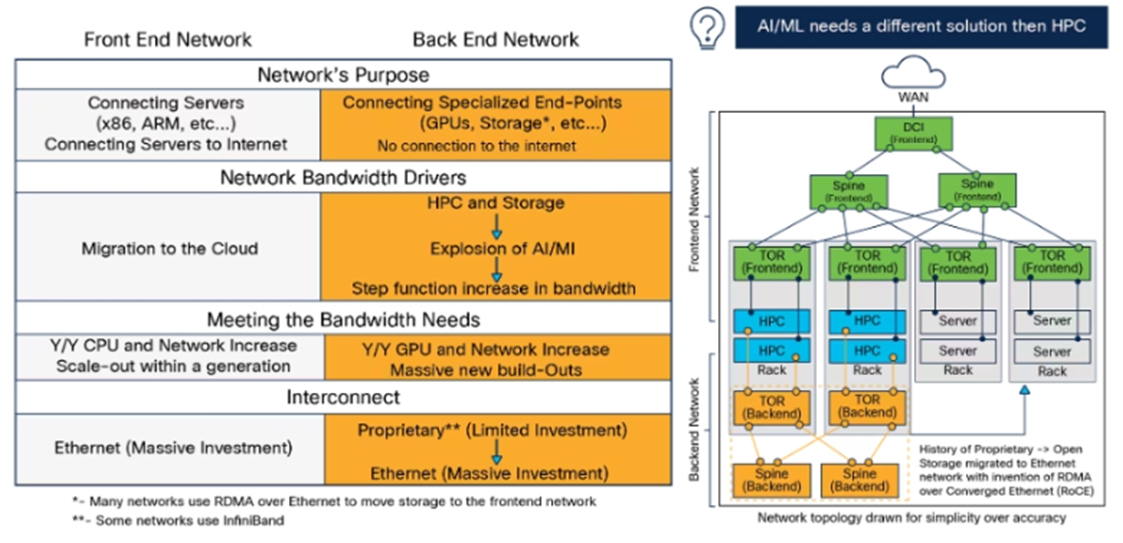

Generative AI is driving demand for cloud computing, as more enterprises want to train and run large AI applications in the cloud. However, only a fraction of the existing cloud infrastructure can handle the massive data and computation needs of artificial intelligence (AI). Hyperscalers like Google, Microsoft, and Amazon are investing in new AI data center architectures, which differ from traditional cloud and high-performance computing (HPC) architectures. These new architectures have more GPU-based servers and back-end networks, which offer much higher bandwidth and connectivity than the front-end networks. The schematic below shows that running AI/machine learning (ML) applications requires a different networking topology, with the back-end network needing most of the upgrade (Exhibit 1).

Exhibit 1: Front End and Back End Network

Source: Cisco

Today's network architecture is mainly designed for east-west traffic, which is the data transfer between servers within or across data centers. East-west traffic is affected by the degree of virtualization and containerization of the applications and infrastructure, which makes them widely distributed and scalable. North-south traffic, which is the data transfer between servers and external locations, is influenced by the number of users and apps. Generative models, a type of AI application, require more bandwidth for east-west traffic because they involve large amounts of data and computation. However, this added traffic can cause congestion and packet loss at the network switches, which adds latency and can interrupt model training or inference.

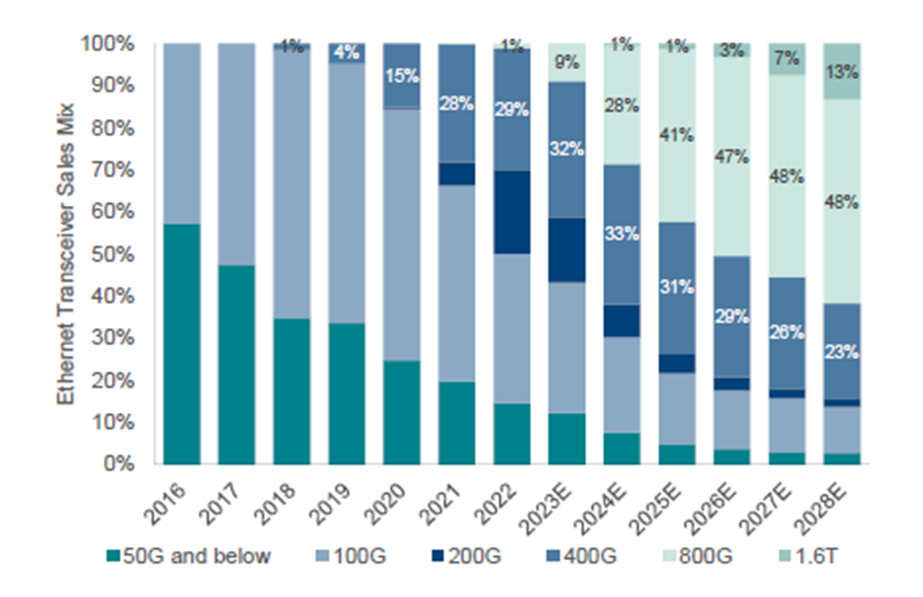

The problem of increased latency with more networking traffic can be solved by various methods, similar to how road traffic can be improved: by adding faster, smarter, extra, or smoother lanes. Hyperscalers are using all of these methods to cope with higher bandwidth requirements and lower latency expectations. Faster lanes means upgrading networks to higher speeds, such as 400G, 800G, or 1.6T, which can handle more data traffic, especially from GPU workloads. Smarter lanes means using advanced load balancing, which can redirect or pause traffic to avoid congestion and packet loss. Extra lanes means adding more switches, which provide more paths for data transfer. Smoother lanes means using InfiniBand, which suits bigger packet sizes and less utilized networks. However, InfiniBand can be more costly and less common than other standards.
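To put rough numbers on the "faster lanes" and "extra lanes" ideas, the short Python sketch below estimates the best-case time to move a block of data at the link speeds mentioned above (400 Gb/s, 800 Gb/s, and 1.6 Tb/s). It is an illustration under stated assumptions, not a model of any real network: the 10 GB payload and the function names are hypothetical, and the calculation ignores protocol overhead, congestion, and packet loss.

```python
# Back-of-envelope sketch: best-case transfer time at different link speeds.
# Assumptions (not from the commentary): a hypothetical 10 GB payload,
# no protocol overhead, no congestion, and perfect splitting across links.

PAYLOAD_BYTES = 10 * 10**9  # hypothetical 10 GB exchanged between GPU servers

# Nominal link speeds in bits per second.
LINK_SPEEDS_BPS = {
    "400G": 400 * 10**9,
    "800G": 800 * 10**9,
    "1.6T": 1600 * 10**9,
}


def ideal_transfer_seconds(payload_bytes: int, link_bps: int, links: int = 1) -> float:
    """Best-case seconds to send payload_bytes over `links` parallel links."""
    if links < 1:
        raise ValueError("links must be at least 1")
    total_bits = payload_bytes * 8
    return total_bits / (link_bps * links)


def transfer_table(payload_bytes: int = PAYLOAD_BYTES, links: int = 1) -> dict:
    """Map each link-speed label to its best-case transfer time in seconds."""
    return {
        name: ideal_transfer_seconds(payload_bytes, bps, links)
        for name, bps in LINK_SPEEDS_BPS.items()
    }


if __name__ == "__main__":
    for name, seconds in transfer_table().items():
        print(f"{name}: {seconds * 1000:.0f} ms on one link")
    for name, seconds in transfer_table(links=2).items():
        print(f"{name}: {seconds * 1000:.0f} ms across two links")
```

In this idealized view, doubling the link speed or doubling the number of parallel links halves the transfer time. Real gains are smaller, since the "smarter lanes" problem of balancing traffic across those links still has to be solved.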

Component companies in the supply chain benefit the most from the adoption of faster speeds, as they can charge higher prices and face less competition for their products. For example, market forecasts for Ethernet transceivers (a crucial component) show a big increase in demand for speeds of 400G and above in 2024 (Exhibit 2).

Exhibit 2: Ethernet Transceiver Sales Mix by Speed

Source: Light Counting, BNP Paribas Exane

In our strategies, we have invested in various companies that make networking gear, semiconductors, and optical components. These companies will benefit from the networking upgrades required by the growing use of Generative AI.

This information is not intended to provide investment advice. Nothing herein should be construed as a solicitation, recommendation or an offer to buy, sell or hold any securities, market sectors, other investments or to adopt any investment strategy or strategies. You should assess your own investment needs based on your individual financial circumstances and investment objectives. This material is not intended to be relied upon as a forecast or research. The opinions expressed are those of Driehaus Capital Management LLC (“Driehaus”) as of December 2023 and are subject to change at any time due to changes in market or economic conditions. The information has not been updated since December 2023 and may not reflect recent market activity. The information and opinions contained in this material are derived from proprietary and non-proprietary sources deemed by Driehaus to be reliable and are not necessarily all inclusive. Driehaus does not guarantee the accuracy or completeness of this information. There is no guarantee that any forecasts made will come to pass. Reliance upon information in this material is at the sole discretion of the reader.
